OpenAI has moved past the initial GPT-5 launch to GPT-5.3 Instant and GPT-5.4 Thinking for general users. More importantly, it released GPT-5.4-Cyber, a specialized variant for defensive cybersecurity. It lowers refusal boundaries for approved researchers to allow binary reverse engineering and malware analysis. The shift marks a move from one-size-fits-all models to high-capability expert variants.

The GPT-5 you waited for is already old news. OpenAI is now shipping GPT-5.4 Thinking as the default for hard tasks, with GPT-5.3 Instant handling quick turns.

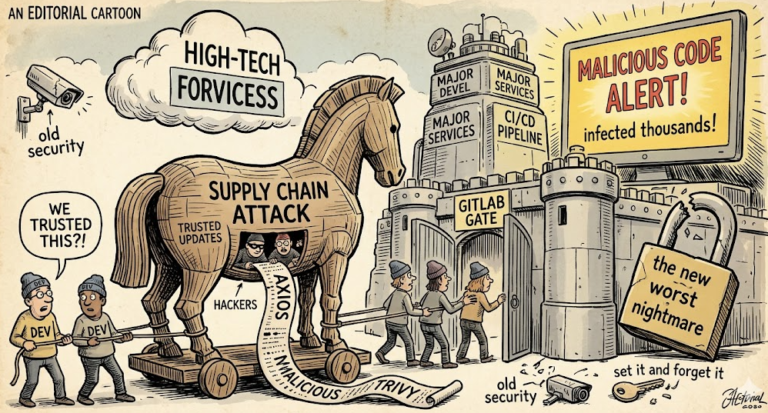

But the real story is the new “Cyber” variant. GPT-5.4-Cyber is built for defenders, and it plays by different rules. For vetted security teams, it dials down refusals so you can actually dissect malware, trace exploits, and reverse engineer binaries.

Why it matters is simple. General models are tuned to say no a lot, which is good for safety but painful for incident response. A SOC analyst doesn’t need a lecture; they need a disassembly walkthrough and a patch suggestion, fast.

The model is not a free-for-all. Access is gated, logged, and scoped to defensive use cases. OpenAI is framing it as a controlled capability, not a public feature. That distinction is why this launch feels different.

This is part of a broader split. Instead of one giant model for everyone, OpenAI is shipping experts. You get a thinking model for reasoning, an instant model for speed, and now a cyber model for security work.

The technical detail matters here. GPT-5.4-Cyber keeps strong safeguards for general users but allows deeper analysis primitives for approved orgs. That includes better handling of assembly, memory dumps, and exploit chains, plus improved tool use for sandboxes.

Early feedback from red teams is that it cuts investigation time. Instead of bouncing between five tools, they stay in one chat and pivot from log analysis to code review to remediation steps. That’s the workflow win OpenAI is chasing.

And yes, it’s a double-edged sword. More permissive models are powerful in the right hands and risky in the wrong ones. That’s why the gating, auditing, and coalition work with security vendors are part of the story, not an afterthought.

So the era of one-size-fits-all is over. GPT-4o suddenly feels like a calculator next to these specialized variants. If you’re in security, this is the model you’ve been asking for. If you’re not, expect more “expert” versions to show up in your domain soon.