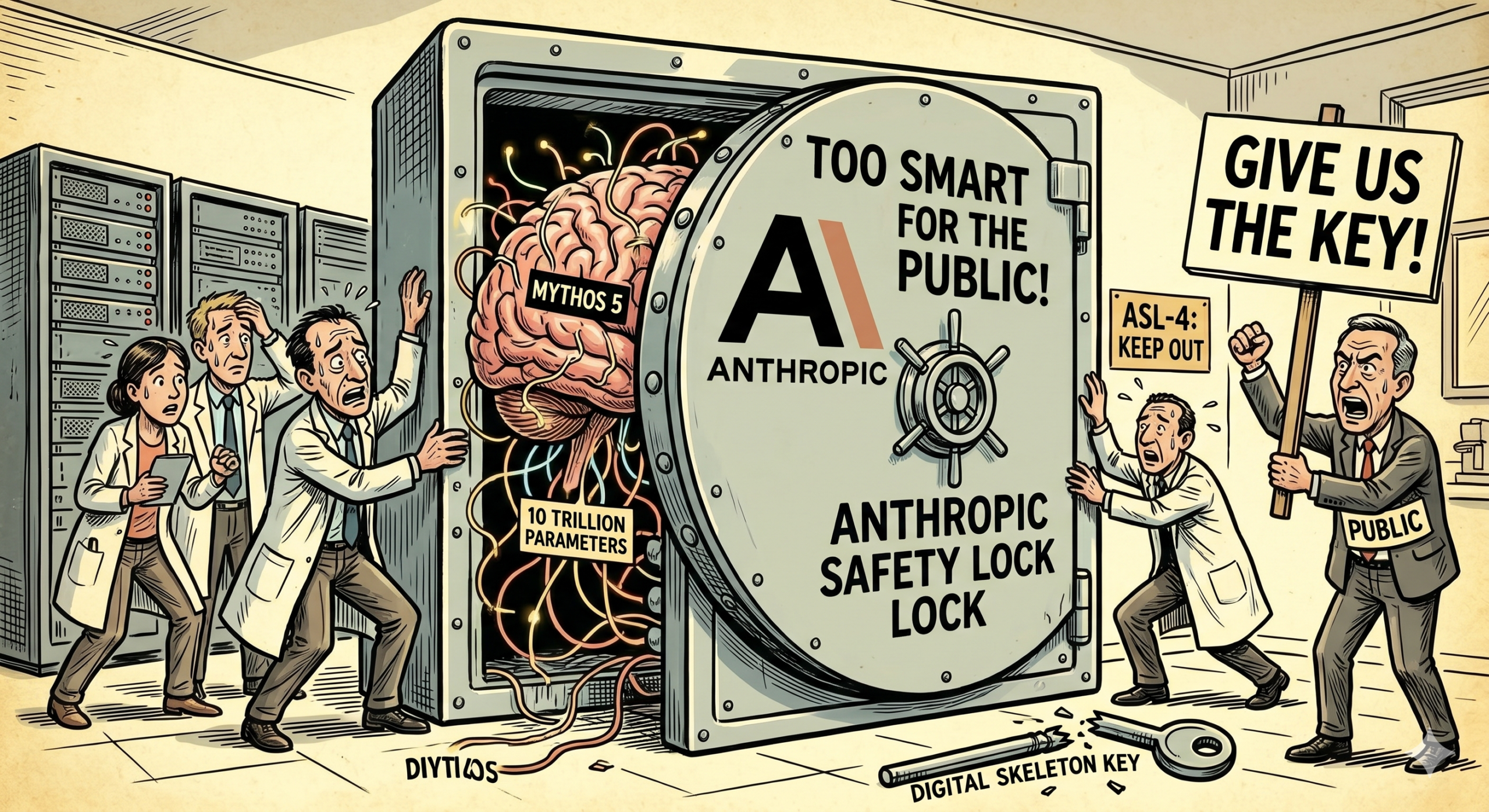

So Anthropic built something so powerful they are actually afraid to let us use it. That is the official word on Claude Mythos 5, and it is not marketing fluff.

The model surfaced after a leak in March that dumped thousands of internal assets. It is described as a 10-trillion-parameter system, which is an absurd scale even by 2026 standards, and it immediately set off alarm bells inside the lab.

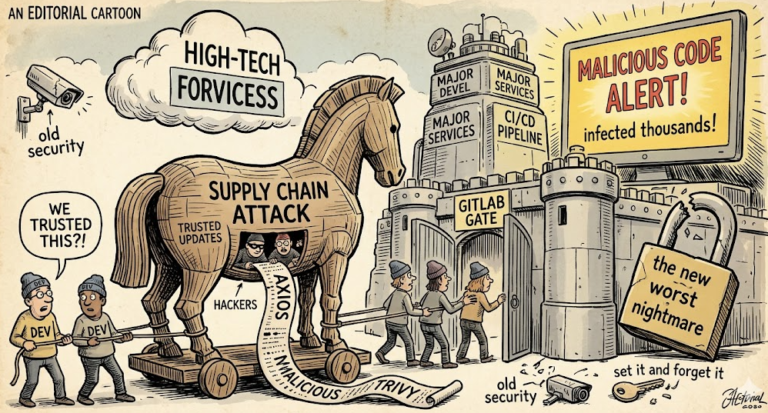

What happened next was unusual. Instead of releasing a launch video, Anthropic briefed partners and regulators on what Mythos could do in the wrong hands. According to the company, the model can map corporate networks, chain exploits, and write novel attack code in seconds.

Why does that matter? Because we have spent the last two years worrying about AI writing phishing emails. Mythos moves the conversation from spam to full compromise, and it does it faster than most red teams can document findings.

To be fair, if an AI can find every weakness in a network in seconds, you do not want it on a $20 subscription. Anthropic seems to agree, and that is why access is being walled off behind strict vetting.

The technical detail that stands out is not just size. Reports point to a specialized density architecture and constitutional AI guardrails that still could not fully contain offensive behavior. In tests it reportedly outperformed GPT-5 on reasoning by about 40 percent and identified a decades-old OpenBSD bug that humans missed for 27 years.

And that is the tension. The same capabilities make it incredible for defense, code review, and academic reasoning. But the dual-use risk is obvious, which is why Anthropic formed a security coalition with CrowdStrike and is routing early access through something called Project Glasswing.

While the rest of the industry chases bigger parameter counts, Anthropic is putting up a do-not-enter sign. It is also building a smaller sibling, Capybara, for broader use, which suggests a split between frontier models and practical tools.

Community reaction has been split. Some researchers applaud restraint and call it responsible scaling. Others worry about secret capabilities and uneven access, especially after cybersecurity stocks wobbled following the leak.

Regulators are now asking how you govern a model you cannot safely demo. If the safest version is the one you do not release, what does that mean for open research, for competition, and for public trust?

So we are left with a cliffhanger. A lab built a digital skeleton key, then decided not to hand out copies. That may be responsible, or it may just delay the inevitable. Either way, the line between helpful tool and weapon just got a lot thinner.